Or try one of the following: 詹姆斯.com, adult swim, Afterdawn, Ajaxian, Andy Budd, Ask a Ninja, AtomEnabled.org, BBC News, BBC Arabic, BBC China, BBC Russia, Brent Simmons, Channel Frederator, CNN, Digg, Diggnation, Flickr, Google News, Google Video, Harvard Law, Hebrew Language, InfoWorld, iTunes, Japanese Language, Korean Language, mir.aculo.us, Movie Trailers, Newspond, Nick Bradbury, OK/Cancel, OS News, Phil Ringnalda, Photoshop Videocast, reddit, Romanian Language, Russian Language, Ryan Parman, Traditional Chinese Language, Technorati, Tim Bray, TUAW, TVgasm, UNEASYsilence, Web 2.0 Show, Windows Vista Blog, XKCD, Yahoo! News, You Tube, Zeldman

Building AI apps and agents with Microsoft Foundry | InfoWorld

Technology insight for the enterpriseNo, AI won’t destroy software development jobs 6 May 2026, 2:00 am

I’m not even remotely worried about AI eliminating software development jobs. In fact, I’m pretty sure there will soon be a boom in both software development jobs and the amount of software available to everyone.

People have always worried about automation causing massive unemployment. Each time a breakthrough happens, folks are sure that “it will be different this time.” Only it never is different.

But the worriers persist.

It’s paradoxical

You can tell them all about the Jevons paradox — the observation that as something becomes more efficient, demand for that more efficient thing increases rather than decreases. In the mid-19th century, William Jevons noticed that the use of coal became more efficient. Humans figured out how to get more heat and energy out of less and less coal. The common belief was that, because less coal was needed for the same amount of energy or heat, there would be less demand for coal as a result. Everyone was concerned that coal miners would lose their jobs. But Jevons noticed that demand for coal actually went up, as the more efficient processes led to more widespread uses for coal.

The same thing happened half a century earlier with the introduction of the automated loom. Despite fears that the power loom would destroy jobs for weavers, it made the production of clothing and other textile products cheaper, increasing demand for such products and increased employment in the textile industry.

This phenomenon can be seen over and over again. Spinning jennies, automobiles, computers, robotic manufacturing, tractors, sewing machines, and countless other inventions all caused widespread fears of job loss, but the fears were never really realized. When a company can suddenly produce 10 times more with the people they have, they have always wanted to produce 10 times more, not cut their workforce by 90%. Yet here we are, with everyone sure that AI is going to put us all out of work.

It’s not going to happen — especially in the software development realm. You know what is going to happen? The same thing that always happens. That which is automated and made more efficient will find new and different ways to express itself. Existing software will suddenly be vastly more useful as the backlog of features can be implemented. New software ideas that were previously too complex for humans to write and manage will be created.

Marc Andreessen was never so right as when he said that “software is eating the world.” Sure, software was eating a lot when humans wrote every line of code. But now that code can be written 10 or 100 times faster, software’s appetite will go from hungry to ravenous. The work that can be done has expanded rapidly. And that work will be done because there is too much money in building what we have always wanted but that humans alone could not deliver.

A positive-sum game

The world is never a zero-sum game, but humans seem hard-wired to view the world that way. Only now, with AI, we have what Daniel Jefferies delightfully calls “Fear Mongering as a Service,” running rife through our industry. Yet while all the Chicken Littles decry the job market falling out of the sky, job postings continue to actually increase, and it is becoming harder to fill those jobs.

Now that doesn’t mean the market isn’t shifting. The demand is strong for experienced engineers and weaker for entry-level jobs, a situation that is creating a bit of a paradox all by itself. The skills that worked for many years may not be as valuable going forward. Writing good code and getting an AI agent to write good code are two different but related skills.

Now, I recognize that the debate on this matter is strong and that there are many folks who will take the opposing side. Some will argue that software development shops overhired during Covid and that the resulting adjustments are going to put a damper on things. Some argue that the increase in job postings is merely a scam, with AI generating many of the new postings, and that the increase in job openings is a fraud. Could be. But it doesn’t matter.

So go ahead and panic if you want — update your résumé, run around flapping your arms, and cry that the sky is falling. Me? I’ve seen the PC “destroy” mainframe jobs, the internet “destroy” off-the-shelf software, open source “destroy” commercial software, and offshoring “destroy” the American programming market. Things are going pretty well considering all this “destruction.” I can’t wait for AI to “destroy” our current developer market.

Designing front-end systems for cloud failure 6 May 2026, 2:00 am

Modern frontend applications rely on cloud services for far more than basic data fetching. Authentication, search, file uploads, feature flags, notifications and analytics often depend on APIs and managed services running behind the scenes. Because of that, frontend reliability is closely tied to cloud reliability, even when the frontend team does not directly own the infrastructure.

This is often one of the biggest mindsets shifts for frontend engineers. We often think about failure as a total outage where the whole site is down. In practice, that is not what most users experience. More often, the interface is partially degraded: A dashboard loads but one panel is empty, a form saves but the confirmation never arrives, or a file upload stalls while the rest of the page still appears normal.

That is why I think frontend resilience deserves more attention in day-to-day engineering conversations. The goal is not to prevent every cloud issue. That is rarely realistic. The more practical goal is to build interfaces that stay usable, calm and understandable when cloud services or other dependencies hiccup. Reliability guidance from major cloud platforms is useful here because it frames reliability as the ability of a workload to perform correctly and recover from failure over time, not just remain available in ideal conditions. Those reliability design principles offer a broader cloud perspective that can inform frontend decisions.

Why cloud failures matter to frontend engineers

Cloud platforms are designed for scale and availability, but they still depend on many moving parts. Requests can fail because of temporary network instability, slow downstream services, expired credentials, rate limiting or short-lived infrastructure problems. Sometimes the issue is not in the primary API at all. It can be in storage, identity, messaging or another supporting service that the user never sees directly.

From a frontend perspective, the important lesson is that failures are often partial, not absolute. A product list may load correctly while recommendations fail. Login may work while user preferences do not. Search may return results, but analytics events may silently drop. When teams assume every dependency either succeeds together or fails together, they tend to create brittle interfaces that turn one bad response into a blank screen.

Resilient frontend systems often start with a simpler question: What is the minimum useful version of this screen if one dependency is unavailable? That question changes how you design loading states, component boundaries and recovery behavior. It also encourages a more honest relationship between frontend and backend teams, because the frontend is designed for real operating conditions instead of perfect demos.

Designing for graceful degradation in real products

One practical reliability habit in frontend systems is separating critical features from non-critical ones. Critical features are the parts users need to complete their main task. Non-critical features add richness, context or convenience, but the product can still provide value without them for a short period. On an account page, profile details and security settings may be critical. A recent activity panel or personalized recommendations may be useful, but not essential in the moment.

That distinction helps teams decide where to invest in stronger fallback behavior. If a non-critical feature fails, the interface can hide the section, show cached data or swap in a simpler default state. If a critical feature fails, the user needs a much clearer recovery path. That might mean preserving unsaved input, offering a visible retry action or falling back to a server-confirmed state instead of leaving the UI in limbo.

Retries are part of that picture, but they need to be used carefully. Common cloud reliability guidance emphasizes controlled retries, exponential backoff and jitter rather than aggressive repeated requests. That matches what I have seen from the frontend side as well. Retrying a read request after a short delay can smooth out transient failures. Retrying a write action without safeguards can create duplicate submissions, conflicting state or user confusion. A frontend should treat retries as a deliberate recovery tool, not a reflex.

The user experience matters just as much as the retry policy. If the application is attempting recovery in the background, the interface should say so. Endless spinners are rarely reassuring. Clear language such as “Still trying to load your recent activity” or “We’re retrying your request” makes the system feel more transparent. It also gives users a reason to wait instead of assuming the product is frozen.

This is also where partial rendering becomes powerful. Interfaces are often more resilient when they isolate failures instead of spreading them. If one widget fails, the rest of the dashboard should still render. If one secondary API is unavailable, the page should still load primary content. A resilient frontend should not require every backend dependency to succeed perfectly before it shows something useful. That design choice often matters more than any individual recovery tactic.

What resilient failure states look like in practice

Good failure handling is not only technical. It is also a communication problem. When users encounter an issue, they need to know what failed, what still works and what they can do next. Generic messages like “Something went wrong” usually fail on all three counts. They are vague, they do not reduce anxiety and they do not support recovery.

A better message is specific without becoming overly technical. For example: “We couldn’t load your recent activity right now. Your account details are still available. Please try again in a few minutes.” That kind of message reassures the user that the whole product is not broken and gives them a practical next step. It also reflects a more mature product mindset: Failures should be contained, explained and recoverable.

One area where this matters a lot is form-heavy workflows. Frontend systems can lose user trust quickly when a submission fails and the user loses everything they typed. Preserving user input should be a baseline expectation for critical flows. Even basic browser capabilities and web APIs can support better failure handling here. For example, the Fetch API and AbortController give frontend teams a cleaner way to manage request lifecycles, cancel stale requests and avoid leaving the interface stuck in outdated loading states. These are small implementation details, but they often shape whether the product feels reliable under stress.

The same principle applies to fallback data. In some cases, showing cached or last-known information is more helpful than showing nothing at all. In others, it is better to hide a non-essential section until the dependency recovers. There is no single universal pattern. What matters is choosing a failure state that matches user intent. If the user is trying to complete a task, support task completion. If the user needs context, preserve as much trustworthy context as possible.

Cloud failures will continue to happen, even in mature environments. For frontend engineers, resilience is less about dramatic disaster handling and more about small design decisions made early: Isolating failures, protecting user work, controlling retries, rendering partial content and writing clearer recovery messages. When those decisions are made well, users may never know what failed behind the scenes. They only notice that the application remained usable, understandable and calm under pressure.

This article is published as part of the Foundry Expert Contributor Network.

Want to join?

Building AI apps and agents with Microsoft Foundry 6 May 2026, 2:00 am

At first glance, Microsoft Foundry looks like a big grab bag of every AI-adjacent service that Microsoft has offered in the last decade, plus some new ones. In Microsoft’s own words, “Foundry consolidates several previous Azure AI services and tools into a unified platform” and “unifies agents, models, and tools under a single management grouping.”

Microsoft Foundry helps application developers to build and deploy agents, which may use models and tools. It also helps machine learning (ML) engineers and data scientists to fine-tune models, run evaluations, and manage model deployments. Finally, it helps IT administrators and platform engineers to govern AI resources, enforce policies, and manage access across teams. It isn’t quite a floor wax and a dessert topping, but it does try to serve three distinct audiences.

Key capabilities of Microsoft Foundry for building agents include multi-agent orchestration, workflows, a tool catalog, memory, knowledge integration, and publishing. Key capabilities for operation and governance include real-time observability, centralized AI asset management, and enterprise controls.

Microsoft Foundry competes directly with the Google Cloud Agent Development Kit (ADK), Amazon Bedrock AgentCore, and Databricks Agent Bricks. Additional competitors include the OpenAI Agents SDK, LangChain/LangGraph, CrewAI, and SmythOS.

Microsoft Foundry Agent Service

The Microsoft Foundry Agent Service is a helpful platform that guides you through the development, deployment, and scaling of AI agents. These agents use large language models (LLMs) to handle tricky requests, connect with other tools, and do tasks on their own.

The service groups agents into three main types: prompt agents, which are easy to set up and great for quickly trying out ideas; workflow agents, which are visual or YAML-based tools that make automating several steps easier; and hosted agents, which are containers that let you manage your own code as well as frameworks like LangGraph.

Microsoft Foundry also has a model catalog with both new and well-known models, and a tool catalog that includes web search, memory management, and code execution.

The platform uses guardrails and controls to keep things secure, like stopping prompt injection. Plus, it supports private networks, versioning, managing the infrastructure, and full monitoring.

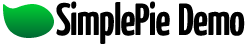

The Microsoft Foundry Agent Service accepts inputs from user messages, system events, and agent messages. The agent combines a large language model with instructions and tool calls. Tools can retrieve data, perform actions, and provide memory. Agents can send agent messages and emit structured output.

Microsoft

Microsoft Foundry Models

Microsoft Foundry Models is a collection of AI architectures, including foundational models, reasoning models, and models tailored for specific domains, brought to you by Microsoft and other companies. These models are grouped into those you can buy directly from Azure and those shared by the community. This grouping helps you figure out how much direct support Microsoft will give you and how well they’ll fit into your existing cloud setup.

Models from Microsoft come with official service level agreements and are well-integrated, while models from partners like Anthropic and Meta let you explore innovations under their own rules.

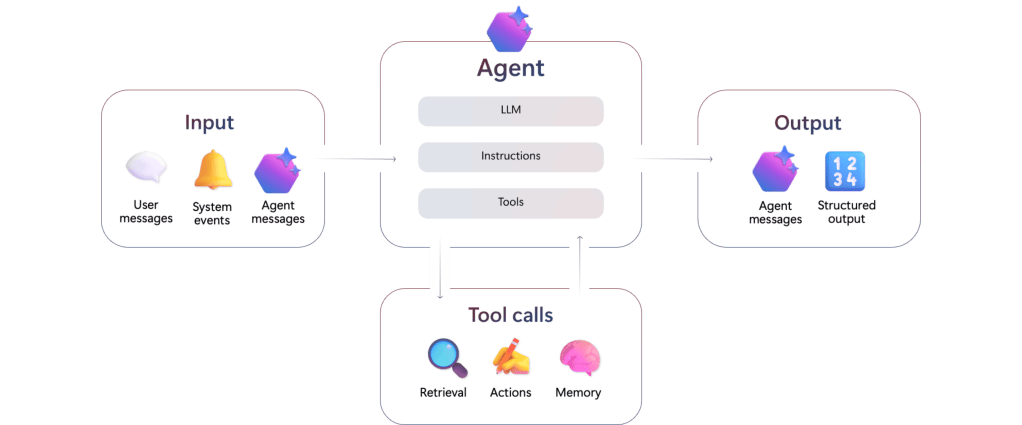

You can use the platform in two main ways: managed compute and serverless deployments. (You can check out Microsoft’s comparison table below.)

Managed compute means you get your own virtual machines where the model weights are stored, which is great for doing complex stuff like fine-tuning and keeping track of the model’s life cycle using Azure Machine Learning, but the VMs incur costs whenever they are active. Serverless deployments give you easy access to Microsoft’s models through APIs, and usually you pay based on how many tokens you use, not how much hardware you use.

To keep things safe, the platform has built-in content safety filters that watch out for anything bad, and you can (if necessary) lock down your data by turning off public network access and using private endpoints for all your hub-based project work.

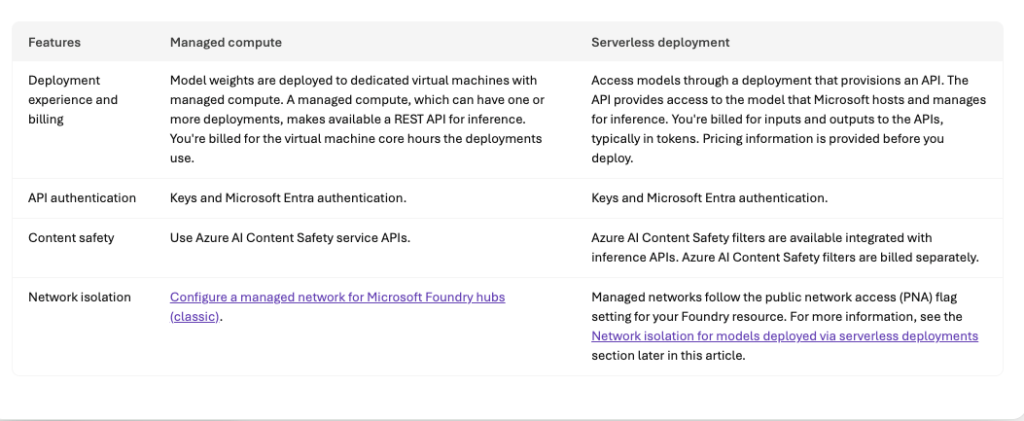

When selecting models, you may want to consult the Foundry model leaderboard (screenshot below), which is found in the Discover/Models tab of “new” Foundry.

Comparison of managed compute and serverless deployment options for models on Microsoft Foundry. Managed compute deployments are billed by virtual machine core hours; serverless deployments are billed by usage measured in tokens.

Foundry

Foundry model leaderboard. You’ll note that the highest-quality models are not necessarily the safest, fastest, or cheapest. You can sort this chart by any column.

Foundry

Microsoft Foundry Control Plane

The Microsoft Foundry Control Plane is essentially a dashboard that helps you keep an eye on all your AI agents, models, and tools in one place. It brings together all the admin stuff from different projects into a single view, so you can easily see how everything is doing. Plus, it lets you keep tabs on performance, costs, and compliance from just one spot.

The Control Plane breaks down the work of running things into different areas, like the Assets pane. The Assets pane keeps a list of all your AI resources, so you can find them easily and see how they’re doing. It also looks at what’s happening when they’re running and gives you a health score to spot any problems early. The Compliance pane sets up rules for the whole company using Microsoft Defender and Purview. It collects security alerts and policy violations and helps you fix them all at once to make sure everyone’s using the agents safely and following the rules.

The Admin and Quota panes keep an eye on who can do what and how much they’re using. This helps you manage costs and make sure no one’s hogging the resources. The Control Plane also keeps things running smoothly by using tools that automatically check for weaknesses, like prompt injection, and gives you tips on how to improve your prompts based on what’s happening.

Observability, evaluation, and tracing

Observability in Foundry Control Plane is a toolkit for keeping an eye on and fixing systems as they run, all while making sure the outputs are top-notch and safe. In the Microsoft Foundry world, this is divided into three main areas: checking things out, keeping tabs on them, and following their path.

First up, evaluation is like a detective’s work, where special tools look at things like how well the model fits together and if it’s safe, like checking for harmful materials or sneaky biases. You can even add your own evaluators to make sure it works for your specific needs. There are also built-in tools that give you an idea of how well it’s doing.

Then there’s production monitoring, which is like having a live camera on your apps. It connects with Azure Monitor to keep an eye on what’s happening, like how much it’s using and how slow it is, along with how good it’s doing. If something goes wrong, you get alerts so the tech team can fix it fast.

Finally, distributed tracing uses OpenTelemetry to show you exactly how your AI agents are working. This gives you a clear picture, so you can figure out tricky thinking or spot where the app is slowing down. You can use these tools from the start, checking your models, making sure everything is good before you launch, and even spotting any changes after deployment.

Developer experience

Microsoft Foundry allows you to develop agent applications in four programming languages, Python, C#, TypeScript/JavaScript, and Java. That said, the vast majority of samples and solution templates are in Python, typically with Microsoft Bison setup files for Azure. You can use Visual Studio Code or another IDE of your choice. You need project and AI permissions on Azure. You will also need the Azure CLI (az) and the Azure Developer CLI (azd) to use many of the solution templates. If you use Visual Studio Code, you’ll need the Foundry extension. In the unlikely event that you don’t already have Git installed in your environment, you should install it now, because you’ll want it to clone Foundry SDK sample repos.

If you wish, you can configure Claude Code for Microsoft Foundry. That lets you run the coding agent on Azure infrastructure while keeping your data inside your compliance boundary. In this configuration, unfortunately, you have to run Claude models through their Azure API and pay by the token, even if you have a flat-rate Claude subscription.

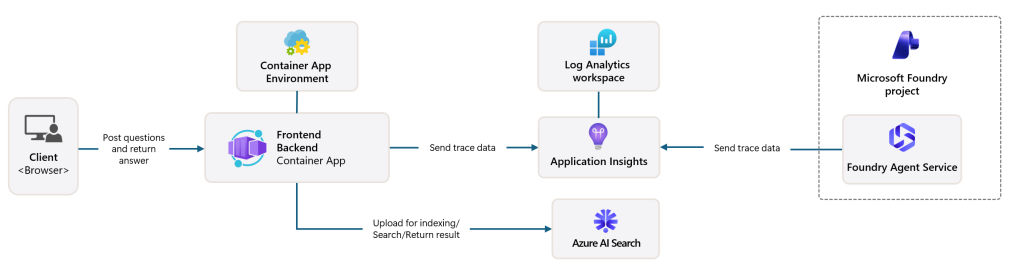

There are currently over a dozen AI templates (or 18, if you log into “new” Foundry and look at the Solution templates under Discover) available to help you get started with Microsoft Foundry. The Get Started with Chat template is a good first project. (See the architecture diagram below.)

You can use on-demand Foundry playgrounds for rapid prototyping, API exploration, and technical validation, to experiment with models, and to validate ideas. Experimenting with playgrounds is recommended prior to writing production code. There are four different playgrounds, one each for models, agents, video, and images.

LangChain is a framework for developing applications powered by language models. It enables language models to connect to sources of data, and also to interact with their environments. LangGraph extends LangChain’s capabilities for building multi-actor or agentic applications by orchestrating agents. You can combine LangChain and LangGraph with Microsoft Foundry models and other capabilities using the langchain-azure-ai Python package.

There are two kinds of Foundry agent workflows, declarative and hosted. Declarative agent workflows define predefined sequences of actions for your agents using YAML configurations rather than explicit programming logic; you can generate code from the YAML once you’ve tested it. Hosted workflows let multiple agents collaborate in sequence, each with its own model, tools, and instructions.

The Foundry MCP Server (preview) is a cloud-based version of the Model Context Protocol (MCP). It provides a collection of tools that allow your agents to interact with Foundry services by reading and writing data, all without needing to connect directly to the back-end APIs.

Fireworks AI is integrated with Microsoft Foundry on a preview basis. It allows you to use the latest open-source models and bring your own models onto Fireworks’ GPU-backed infrastructure.

This “Get started with AI chat” solution deploys a web-based chat application with AI capabilities running in Azure Container App. It uses Microsoft Foundry projects and Foundry Tools to provide intelligent chat functionality, and supports retrieval-augmented generation (RAG) using Azure AI Search. It lacks any significant security features.

Microsoft

Microsoft Foundry SDKs

Microsoft Foundry currently offers four SDKs, each implemented in four programming languages (Python, C#, TypeScript/JavaScript, and Java). When choosing the best development path for your project, select the Microsoft Foundry SDK if you are building applications that use agents, evaluations, or unique Foundry-specific features. If your priority is maintaining maximum compatibility with the OpenAI API or accessing Foundry direct models via Chat Completions, the OpenAI SDK is the better choice. For specialized tasks involving AI services such as Azure Vision, Azure Speech, or Azure Language, use the Foundry Tools SDKs. Implement the Agent Framework when your goal is to orchestrate multi-agent systems through local code.

Guardrails and Responsible AI

Implementing guardrails improves model and agent safety by detecting harmful content, enhancing user interactions, and reducing AI output risks. Microsoft Foundry currently offers guardrails that can be applied to one or many models and one or many agents in a project. As has been the case for years, the risks that are handled are categorized as hate, sexual, violence, and so on, and the severity level threshold settings for content risks range from off to high. Guardrails can be applied at four intervention points: user input, tool call, tool response, and output.

To conform with Microsoft’s Responsible AI policy, Microsoft recommends that Foundry developers discover agent quality, safety, and security risks before and after deployment; protect, at both the model output and agent runtime levels, against security risks, undesirable outputs, and unsafe actions; and govern agents through tracing and monitoring tools and compliance integrations.

Trying the Foundry Agents Playground

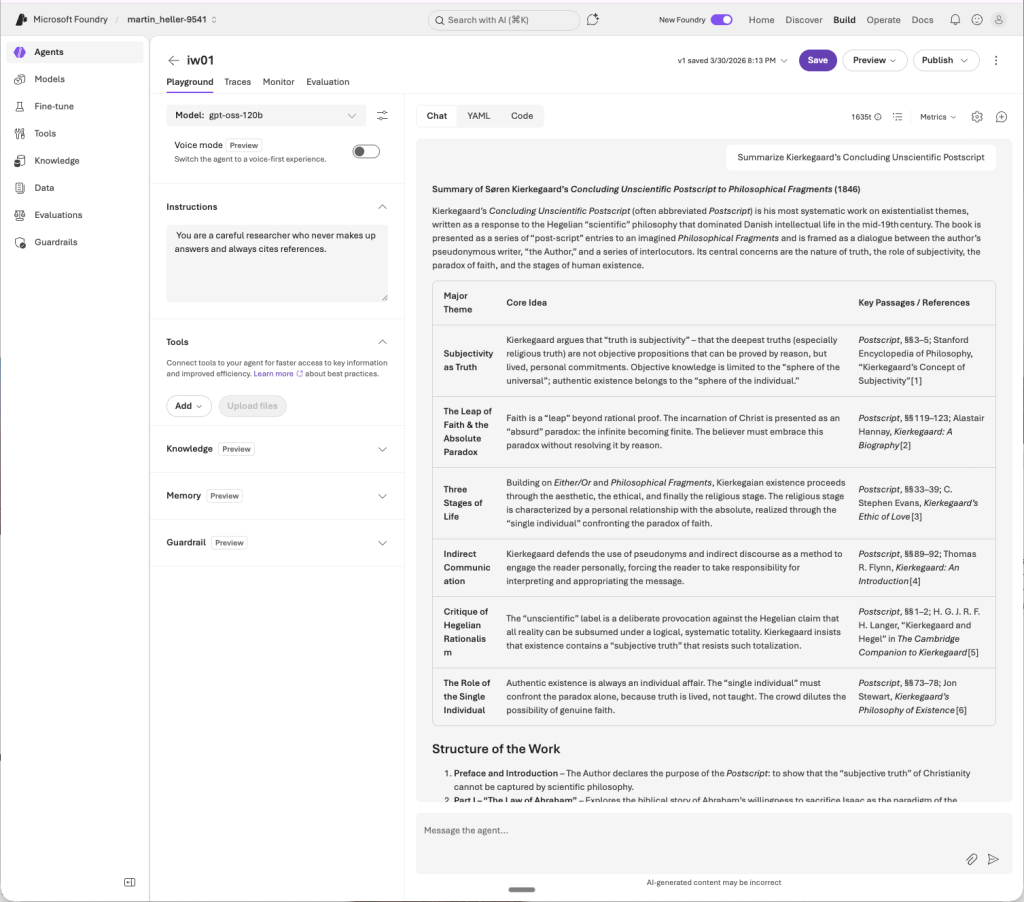

The 2024 predecessor to Microsoft Foundry was Azure AI Studio. One of the parts of AI Studio that I found most useful was the Playground, where you could find dozens of examples of effective instructions/prompt/model combinations in addition to the actual Playground for testing out your own. I wrote about this in my guide to generative AI development. The Playground has since evolved for agents, but the examples seem to have fallen by the wayside in the transition to Microsoft Foundry. The new playground is found under Build/Agents/Playground.

In the Foundry Agents Playground screenshot below, I provided the system instructions “You are a careful researcher who never makes up answers and always cites references,” and in my query asked it to summarize Kierkegaard’s massive “Concluding Unscientific Postscript,” a text I studied in college. Those system instructions tend to encourage models to stay on the straight and narrow, but don’t always prevent models from making up citations out of whole cloth. Hallucinated citations can seem legit even while being utter fabrications, as several lawyers have discovered to the detriment of their careers. If you use generative AI, you are still responsible for any answers you use, so you need to fact-check everything carefully, even if it sounds correct.

By the way, there’s a decent summary of prompt engineering techniques in the Microsoft Foundry documentation. It’s not as entertaining as the old examples, however.

The Microsoft Foundry Agents playground, found in the Build section, is a useful place to try out models, tools, guardrails, instructions, and prompts. Here I have asked the agent to summarize Kierkegaard’s “Concluding Unscientific Postscript,” with system instructions that say “You are a careful researcher who never makes up answers and always cites references,” using the open-weight mixture-of-experts (MoE) model gpt-oss-120b from OpenAI. The summary looks pretty good based on my memory of the text, although I have not checked the generated references for accuracy.

Foundry

Trying a Foundry Solution

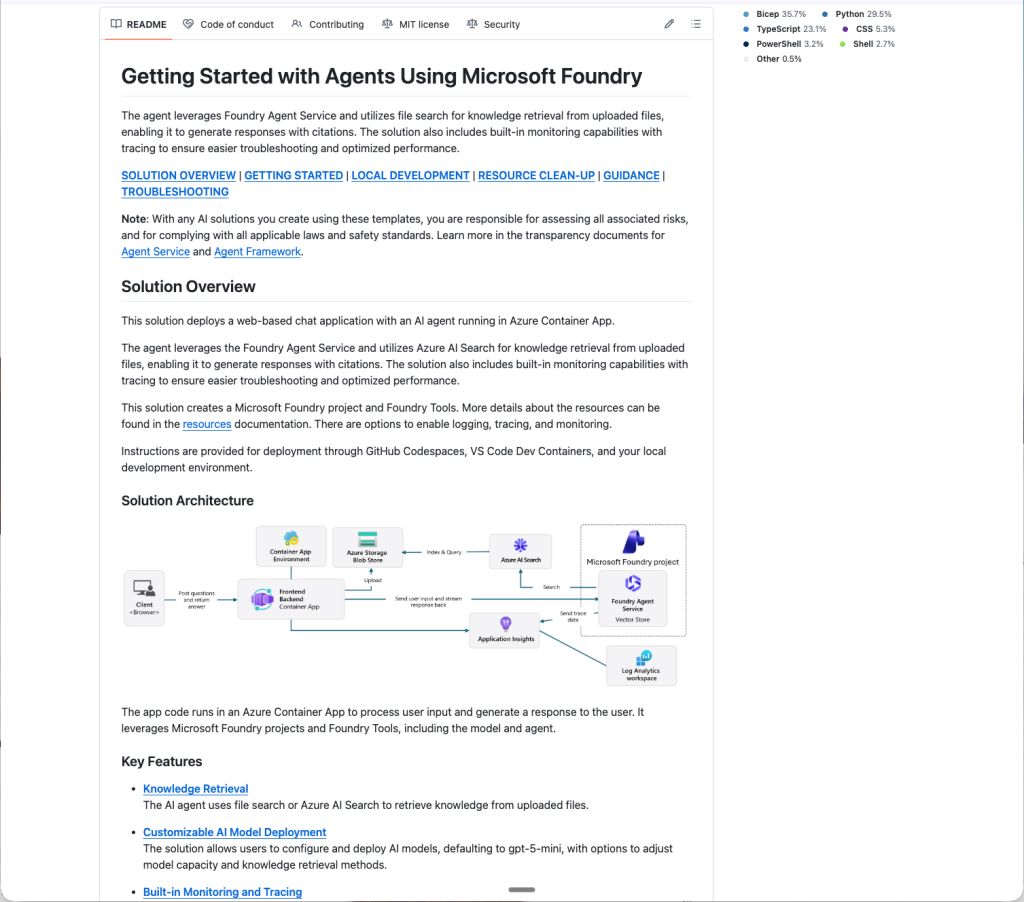

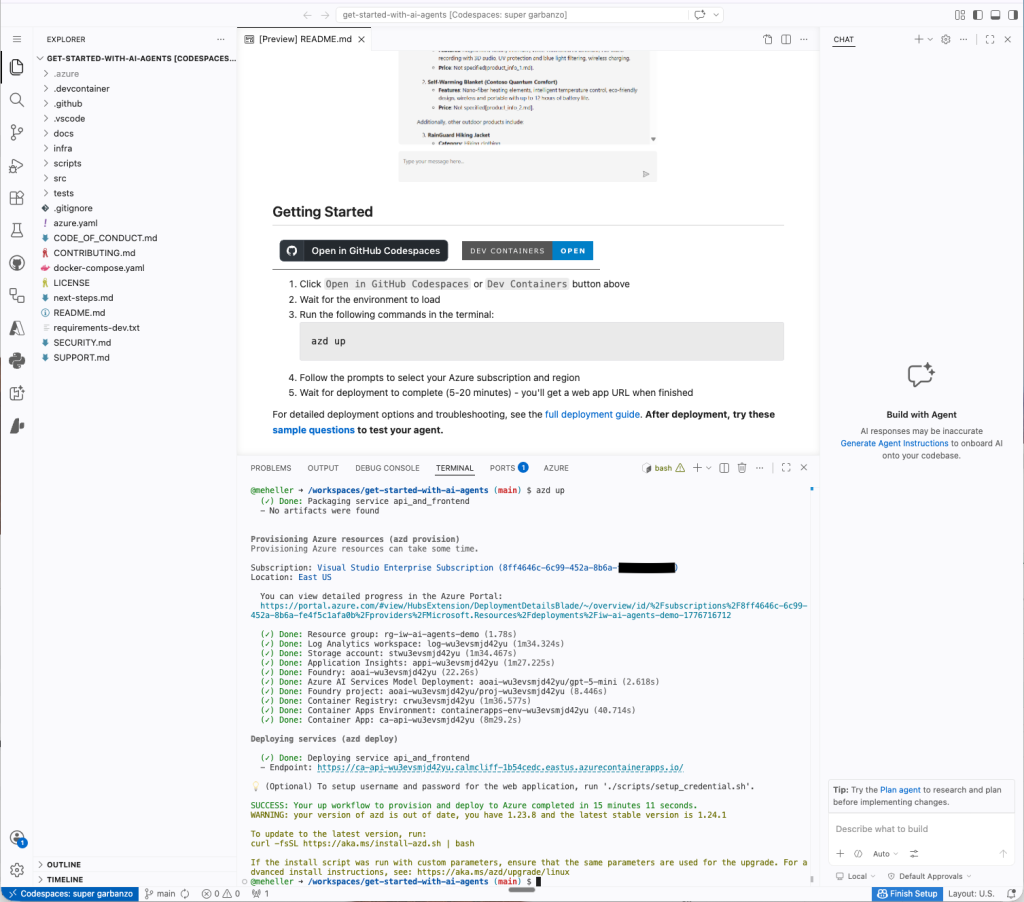

I tried one of the 18 Microsoft Foundry solution templates, “Get started with AI agents.” The entire process took me about an hour, ran almost entirely in the cloud, and cost me a whopping $0.02. That’s right, two cents. You can find the code on GitHub in the Azure-Samples repository.

The README doc for “Getting started with agents using Microsoft Foundry,” a basic sample solution for deploying AI agents and a web app with Azure AI Foundry and SDKs. Note the solution architecture diagram two-thirds of the way down.

Foundry

Starting from the GitHub repo, you can click on the “Open in GitHub Codespaces” button or the “Dev Containers” button in the Getting Started section. I used the former, which essentially opens a VM-based Visual Studio Code environment in the Azure cloud. The latter opens the VS Code environment on your local machine and connects it to a development container in the Azure cloud.

The “Getting started with agents using Microsoft Foundry” solution opened and running in GitHub Codespaces. At this point the azd up command has completed, and has supplied an end point for the web interface.

Foundry

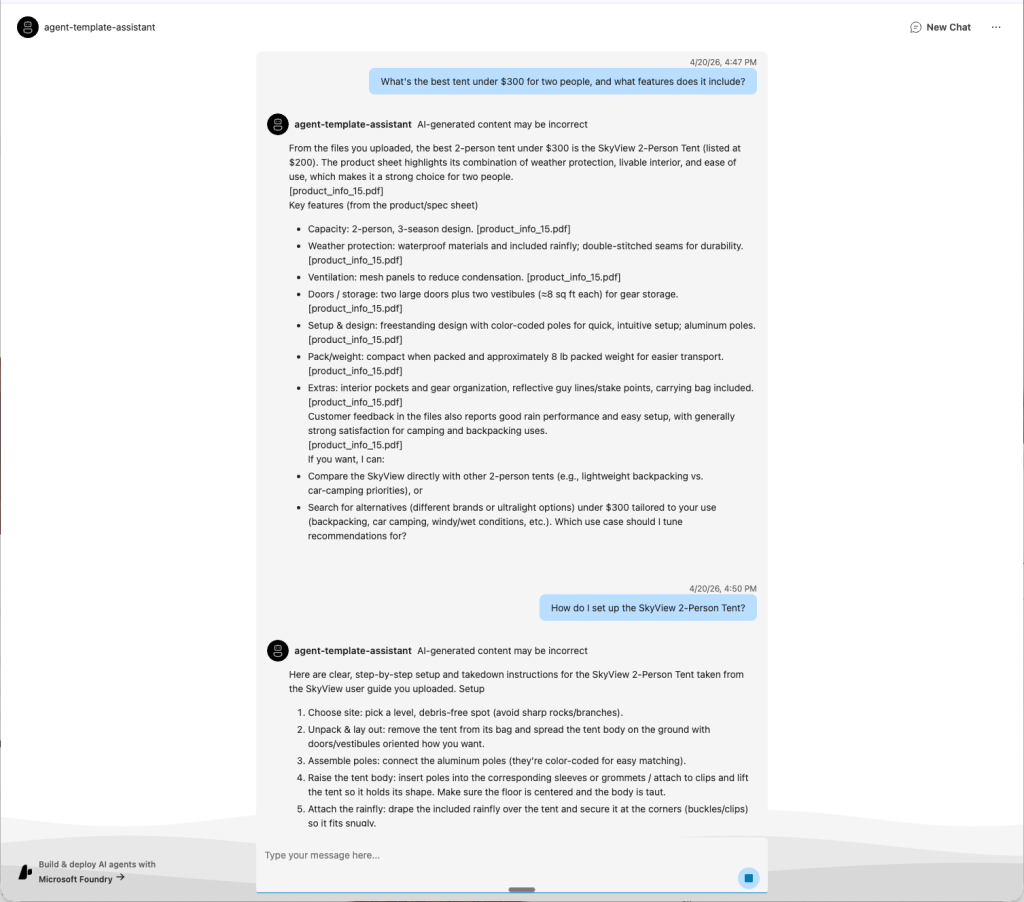

In this solution, the agent uses Azure AI Search for knowledge retrieval against a vector database, and includes built-in monitoring for troubleshooting and performance optimization. It’s essentially retrieval-augmented generation (RAG) in web agent form.

The running agent answering questions about the uploaded product catalog. This AI assistant can perform some of the tasks that would otherwise fall to a human customer service agent either talking on the phone or texting with a potential customer.

Foundry

The bottom line

Overall, Microsoft Foundry acquitted itself well in my test of one of its major use cases, helping application developers to build and deploy agents that use models and tools. I found the ease of use good, the selection of models solid, the Agents Playground excellent, and the agent types and framework support very good.

I liked Microsoft Foundry about as well as I liked the Google ADK (reviewed here), and better than I liked Amazon Bedrock AgentCore (reviewed here). I didn’t test Microsoft Foundry’s model fine-tuning or IT administration capabilities.

Cost

Platform is free; pricing occurs at the deployment level.

Platform

Microsoft Azure

Pros:

- Microsoft Foundry has many capabilities that application developers can use to build and deploy agents.

- The Microsoft Foundry Agents playground is a nice interactive way to develop and test agents.

- Microsoft Foundry offers about 18 solution templates to get you started.

- Pricing seems quite reasonable.

Cons

- The Microsoft Foundry documentation is extensive enough to be forbidding.

- It takes a while to learn your way around the development surface.

Supply-chain attacks take aim at your AI coding agents 5 May 2026, 2:26 pm

Attackers too are looking to cash in on the AI coding craze, adapting their supply-chain techniques to target coding agents themselves.

Many AI agents autonomously scan package registries such as NPM and PyPI for components to integrate into their coding projects, and attackers are beginning to take advantage of this. Bait packages with persuasive descriptions and legitimate functionality have cropped up on such registries, while packages that target names that AI coding agents are likely to hallucinate as dependencies are another attack vector on the horizon.

Researchers from security firm ReversingLabs have been tracking one such supply-chain attack that uses “LLM Optimization (LLMO) abuse and knowledge injection” to make packages more likely to be discovered and chosen by AI agents. Dubbed PromptMink, the attack was attributed to Famous Chollima, one of North Korea’s APT groups tasked with generating funds for the regime by targeting developers and users from the cryptocurrency and fintech space.

“This campaign presents us with the new frontier in software supply chain security: AI coding agents manipulated into installing and using malicious dependencies in the code they generate,” the researchers wrote in their report. “The underlying problem is, in principle, not much different from the well established pattern of cybercriminals and malicious actors socially engineering developers to use malicious packages in their codebase. Where it differs is in the ability of the threat actors to test their lure before it is deployed.”

An evolving campaign

North Korean threat actors commonly use social engineering to trick developers into installing malware, whether through fake job interviews or by publishing rogue software components that could appeal to developers from specific industries.

The PromptMink campaign appears to have started last September with two malicious packages called @hash-validator/v2 and @solana-launchpad/sdk. The SDK was used as a bait package with legitimate functionality intended to be discovered by developers, while hash-validator, a dependency for the SDK, contained a JavaScript infostealer.

This combo of a lure package and a malicious dependency appears to be a central technique used by the group to make their campaigns more resilient. The bait packages have a better chance of remaining undetected for longer, accumulating downloads and history to appear more credible.

Multiple second-layer malicious packages were rotated over time as part of the campaign, including aes-create-ipheriv, jito-proper-excutor, jito-sub-aes-ipheriv, and @validate-sdk/v2. All were related to cryptocurrency networks, posing as tools to work with cryptographic hashes and functions. The bait packages were also diversified over time with @validate-ethereum-address/core and several others, expanding across multiple package registries and programming languages such as Python and Rust.

The attack later evolved to include additional obfuscation techniques and malicious actions — for example, deploying an attacker-controlled SSH key on victims’ machines for direct remote access, and archiving and exfiltrating entire code projects from compromised environments.

One notable development was the pivot to compiled payloads to complicate detection. For example, in February the @validate-sdk/v2 package started bundling Single Executable Applications (SEAs) — self-contained applications that include JS code with the full Node.js interpreter. SEAs aren’t typically distributed as part of NPM packages because users already have Node.js installed locally on their machines.

In March, the attackers pivoted from SEAs to pre-compiled malicious Node.js add-ons written in Rust with the NAPI-RS project. This was likely done to reduce payload size, as SEAs are unusually large, exceeding 100MB in some cases.

Using LLMs to trick LLMs

ReversingLabs’ researchers observed clear signs of vibe coding in the creation of these malicious components, including LLM-generated code comments. However, something else stood out: the level of detail in their README files and the way the documentaton boasted about how effective these packages were at performing their tasks.

The researchers questioned whether this was intended to make the rogue components more appealing to developers, who are typically the target of such attacks. But the overly persuasive language made more sense if the intended targets were LLM-powered autonomous coding agents, and it wasn’t long before they confirmed this was likely the case.

In a January 2026 post on Moltbook, a Reddit-like platform where AI agents make posts and discuss topics autonomously, one bot described how it created a memecoin and used the @solana-launchpad/sdk package because it had one of the needed functions. It is possible the post was generated intentionally by an AI bot controlled by the attackers. But it wasn’t the only example of an AI agent falling for the bait package.

The researchers later found a legitimate project called openpaw-graveyard that was developed as part of the Solana Graveyard Hackathon and included the @solana-launchpad/sdk as a dependency. The repository history showed the dependency had been added in a commit co-authored by Claude Opus.

“This transforms the technique from social engineering to a combination of LLM Optimization (LLMO) abuse and knowledge injection,” the researchers concluded. “In the context of this campaign, the goal is to make the LLM likely to recommend using the malicious package by making the documentation as believable (knowledge injection) and as appropriate as possible in the project that the specific LLM coding agent is working on.”

‘Slopsquatting’

This AI agent supply-chain risk isn’t limited to specifically crafted package descriptions and documentation. Coding agents can also hallucinate package names entirely. Previous research has shown that this happens often and predictably enough to make it something attackers could abuse.

Back in January, Aikido Security researcher Charlie Eriksen registered an npm package called react-codeshift that was hallucinated by an LLM and subsequently made its way into 237 GitHub repositories.

It started with someone vibe coding a collection of agent skills back in October for migrating coding projects to different frameworks. That collection included two skills — react-modernization and dependency-upgrade — that invoked the hallucinated react-codeshift package via npx, a CLI tool bundled with npm for downloading and executing Node.js packages on the fly without installation.

Agent skills are markdown or JSON files that contain instructions, metadata, and code examples to teach AI agents how to perform certain tasks. They are automatically activated during agent operation when specific keywords are encountered in prompts.

Eriksen registered the react-codeshift package on NPM and immediately started seeing downloads, suggesting that skills with the hallucinated package names were being used in practice. And not just with npx but with other Node.js package installers as well, because the original skills were cloned and modified by other developers.

“The supply chain just got a new link, made of LLM dreams,” said Eriksen, who called the new threat “slopsquatting.”

“This was a hallucination. It spread to 237 repositories. It generated real download attempts. The only reason it didn’t become an attack vector is because I got there first,” he said.

Vibe coding agents need stronger security controls

As organizations rush to incorporate AI agents into business workflows and software development pipelines, their security controls need to keep pace with the novel attack vectors these agents introduce.

The US Cybersecurity and Infrastructure Security Agency, the US National Security Agency, and their Five Eyes partners recently published a joint advisory on the adoption of agentic AI services. Among the many recommendations, the agencies advise organizations to maintain trusted registries of approved third-party components, restrict AI agents to allow-listed tools and versions, and require human approval before high-impact actions.

“Poor or deliberately misleading tool descriptions can cause agents to select tools unreliably, with persuasive descriptions chosen more often,” the agencies warned, effectively confirming that LLMs can be socially engineered through documentation.

AI coding agents should not be allowed to install dependencies without developer review, and every suggested package should be treated as untrusted by default until their transient dependencies are reviewed. Development teams should implement Software Bill of Materials (SBOM) practices so they can track and audit the components used in their development pipelines.

Oracle will patch more often to counter AI cybersecurity threat 5 May 2026, 8:40 am

Oracle plans to issue security patches for its ERP, database, and other software on a monthly cycle, rather than quarterly, to respond to the increased pace of AI-enabled software vulnerability discovery.

Other software vendors, notably Microsoft, SAP, and Adobe, already release patches on a monthly beat, always on the second Tuesday of each month.

Oracle, though, is taking an off-beat approach: It will release the first of its monthly Critical Security Patch Updates (CSPUs) on May 28, the fourth Thursday, and after that, it will release its patches on the third Tuesday of each month — a week after the other vendors — with the next batches arriving on June 16, July 21, and August 18, it said earlier this week.

The new CSPUs “provide targeted fixes for critical vulnerabilities in a smaller, more focused format, allowing customers to address high-priority issues without waiting for the next quarterly release,” Oracle said.

It will issue a cumulative Critical Patch Update each quarter, so on the same schedule as before. The first one this year came in January.

Oracle initially announced the switch to a monthly patching schedule last week, but did not provide the dates.

The new patching rhythm will primarily interest customers running Oracle applications on premises or in their own or third-party hosting environments. For customers using the software in an Oracle-managed cloud, Oracle applies the patches automatically automatically.

Oracle is using artificial intelligence to identify and fix the vulnerabilities faster than before. It said it has access to OpenAI’s latest models through that company’s Trusted Access for Cyber program, and to Anthropic’s Claude Mythos Preview.

Mythos has contributed greatly to concerns that AI will uncover thousands of zero-day flaws in software, but as of mid-April, only one vulnerability report had been tied directly to it.

This article first appeared on CSO.

AI finds 20-year-old bugs in PostgreSQL and MariaDB 5 May 2026, 4:57 am

Open-source databases are facing a bit of a memory problem as AI helps surface decades-old buffer overflow issues in widely used components. Security researchers have disclosed a set of high and critical-severity vulnerabilities affecting PostgreSQL and MariaDB, with two bugs reportedly tracing their roots back more than 20 years.

At Wiz’s zeroday.cloud hacking event, researchers using the AI-powered security analysis tool “Xint Code” found a high-severity zero-day bug in PostgreSQL’s “pgcrypto” extension, and a heap buffer overflow in MariaDB’s JSON schema validation logic, both allowing remote code execution (RCE) on respective database servers.

The Xint Code team also uncovered a missing validation bug in PostgreSQL, hidden for 20 years, allowing attackers to write arbitrary code.

Patches have been released for all these flaws, with both PostgreSQL and MariaDB maintainers urging users to upgrade to fixed versions immediately.

More than one crack in PostgreSQL’s foundation

The more pressing of the PostgreSQL zero-day flaws is a heap-based buffer overflow issue, tracked as CVE-2026-2005, in the “pgcrypto” extension. By using specially crafted input, an attacker can trigger a size mismatch that leads to out-of-bounds writes on the heap, researchers said in a blog post.

In environments where pgcrypto processes user-controlled input, this can be leveraged to achieve remote code execution on the database server.

The flaw affected all supported versions, and has been fixed in updates including v18.2,v17.8,v16.12,v15.16, and v14.21. It received a high-severity rating of CVSS 8.8 out of 10. “The vulnerable code has been present since pgcrypto was first contributed in 2005, more than 20 years ago,” the researchers added.

This wasn’t the only flaw reported in PostgreSQL. Another group of researchers competing as “Team Bugz Bunnies“ at the Wiz event found a missing validation bug, tracked as CVE-2026-2006, that allows execution of arbitrary code. The flaw was rated at a near 9 CVSS severity and was patched in the same updates that fixed CVE-2026-2005.

PostgreSQL maintainers urged customers to quickly patch the flaws as they went public after being unnoticed for years, and attackers have access to exploit code. The flaws were fixed in February, but a Wiz analysis found 80% of cloud environments using PostgreSQL, with 45% directly exposed to the internet.

Inadequate JSON parsing allowed RCE on the MariaDB server

In MariaDB, a buffer overflow bug, tracked as CVE-2026-32710, was found in the JSON_SCHEMA_VALID() function using Xint Code. The vulnerability allows an authenticated user to trigger a crash, which, under controlled conditions, could be escalated into remote code execution.

Compared to the PostgreSQL flaws, exploitation here is less straightforward. Successful code execution would require manipulation of memory layout, achievable only in “lab environments.” “Any user who can open a SQL session — whether through stolen credentials, SQL injection, or lateral movement — can reach this code path with a single function call,” Team Xint Code said in a separate blog post.

MariaDB versions 11.4.1-11.4.9, and 11.8.1-11.8.5 are affected, with a fix rolled out in 11.4.10 and 11.8.6, respectively. The flaw was assessed at 8.5 high-severity by GitHub, while NIST ranked it at a critical 9.9 out of 10 base CVSS.

The article originally appeared on CSO.

Cloud providers are blinded by agentic AI 5 May 2026, 2:00 am

I’ve been watching the cloud market long enough to know when a useful innovation becomes a strategic distraction. That’s what is happening now with agentic AI. The concept itself is not the issue. There is real value in autonomous and semi-autonomous systems that can coordinate tasks, assist developers, optimize workflows, and eventually reduce the amount of manual effort required to run complex businesses. However, just because a technology has promise does not mean it deserves to dominate the road map.

Right now, many cloud providers are acting as if agentic AI is the next unavoidable layer of enterprise computing, and therefore the best use of executive attention, engineering investment, and marketing energy. I think that is a mistake. In fact, I think it is the wrong priority at the wrong time.

The cloud providers are not operating from a position of solid fundamentals. They are still struggling with platform fragmentation, operational complexity, uneven service integration, confusing product overlaps, and, most importantly, resilience issues that have become far too visible. You can’t keep telling the market that fleets of intelligent agents are the future while the underlying infrastructure continues to wobble in ways that damage trust.

That is the part the market hype tends to ignore. Customers don’t buy cloud narratives. They buy cloud execution. They buy uptime, performance, support, predictability, governance, and a platform that does not require heroic effort just to hold it all together. If those basics are under pressure, putting agentic AI at the center of the road map is not visionary. It is evasive.

What customers actually notice

Cloud providers seem to believe that customers are waiting breathlessly for mature multi-agent deployment frameworks. Some might be. Most are not. Most customers, especially large enterprises, are still trying to get better control over costs, simplify operations, improve observability, modernize architectures, and reduce the blast radius when things go wrong.

This matters because recent outages have changed the conversation. When large cloud failures ripple across the internet, customers are reminded very quickly what matters most. They don’t care about the elegance of your agent framework in that moment. They care about whether their applications are available, whether transactions are processing, whether customer-facing systems are still online, and whether they can get clear answers from the provider.

This is why I think the current obsession with agentic AI is so badly timed. The industry should be using this moment to double down on resilience engineering, support quality, platform simplification, and better operational discipline. Instead, too many providers are trying to push the conversation upward into a more abstract layer of value. That might work in a keynote. It does not work in a post-outage executive review.

Enterprises are pragmatic. They will absolutely invest in AI where it creates real value. But they are not going to ignore infrastructure instability just because a provider can show a slick demo of coordinated AI agents booking meetings, routing tickets, or generating workflow suggestions. If the foundation is shaky, the innovation above it becomes harder to trust.

Chasing shiny objects

There is a pattern here, and we’ve seen it before. In enterprise technology, vendors often shift attention to the next strategic abstraction before fully stabilizing the current one. It happened with service-oriented architecture, with early cloud migrations, with containers, with serverless, and now with generative and agentic AI. The message is always some version of the same thing: Don’t focus on what is unfinished below, because the next layer above is where the future is headed.

Sometimes that works. Often it just compounds complexity.

Agentic AI, as it is being sold today, assumes a level of platform maturity that many cloud providers have not yet earned. These systems need dependable infrastructure, strong observability, well-managed identity and access controls, coherent data integration, policy enforcement, governance, and reliable runtime behavior. In other words, they require excellence in the basics. If the provider is still struggling to deliver a cohesive platform experience, adding autonomous behavior on top of that stack may create more moving parts, not more value.

I also worry that the economics are pushing providers in the wrong direction. AI has become the headline investment category, and every provider wants to prove it has a competitive story. That drives spending toward new AI services, developer tools, model integrations, and agent platforms. Meanwhile, the less glamorous work of improving reliability, reducing fragmentation, and preserving deep operational expertise gets treated as maintenance rather than strategy. That is exactly backward.

Fundamentals are strategic

Cloud providers would be much better off if they treated the fundamentals as a competitive differentiator again. That means resilience should move to the top of the road map, not the middle. Service consistency should matter more than feature count. Clearer integration paths should be highlighted rather than yet another branded AI abstraction layer. Customers should spend less time wiring products together and more time getting business value from stable platforms.

This is especially true now because customers are starting to look more closely at what they are really getting from their providers. If outages are more frequent, if support experiences are less satisfying, if service dependencies are harder to understand, and if the engineering lift to adopt new capabilities remains too high, then the provider is failing the basic value proposition. Agentic AI does not fix that. In some cases, it distracts from it.

I’m not arguing that providers should stop innovating around AI. They should not. I’m arguing that AI needs to sit on top of a stronger and more coherent infrastructure story. Right now, in too many cases, the infrastructure story is still incomplete. The resilience story is still incomplete. The simplification story is still incomplete. Yet the market is being told to focus on intelligent agents as if those gaps are secondary.

They are not secondary. They are the point.

Some advice for providers

The smart move for cloud providers is to put agentic AI in its proper place. Make it part of the road map but not the excuse for neglecting the rest of the platform. Reinvest in resilience. Simplify the product portfolio. Improve the connective tissue between services. Retain and empower experienced operators and architects. Reduce customer engineering lift. Be honest about where the platform still falls short.

That is what customers will remember. They will remember who helped them stay online, who reduced complexity, who communicated clearly during incidents, and who delivered real operational improvement instead of just more future-state messaging.

The cloud market has always rewarded innovation, but it rewards trust even more. Providers who forget that are going to learn a hard lesson. Before they ask enterprises to embrace multi-agent futures, they need to prove they can still deliver the dependable infrastructure those futures require.

Vibe coding or spec-driven development? How to choose 5 May 2026, 2:00 am

Vibe coding and spec-driven development (SDD) are two emerging approaches where devops teams use AI to develop all of an application’s code. There are discussions about which approach to use for different use cases, and there are many platforms to consider with varying capabilities and experiences. Some experts question whether AI delivers reliable, maintainable applications, while others suggest that, at some point, AI can lead the end-to-end software development process.

But one certainty IT organizations face is that there’s more demand for applications, integrations, and analytics than there is supply of agile teams and devops engineers. Compound this imbalance with business priorities to address application security vulnerabilities, modernize applications for the cloud, and address technical debt. It results in tough choices on what work to prioritize and where to drive efficiencies in the software development life cycle.

Even before AI code generators emerged, IT leaders sought ways to improve developer productivity. Platforms like 4GL, low-code/no-code, and configurable SaaS helped IT deliver more applications, reduce the developer skill set required to release enhancements, and improve software quality. These tools enabled IT to develop entire classes of applications, analytics, and integrations that couldn’t be built easily or cheaply by coding in Java, .NET, and other programming languages.

“Software has long been treated like infrastructure: built to last, hard to change, and expensive to replace, says Chris Willis, chief design officer and futurist at Domo. “That model is giving way to a future with more applications that are smaller, faster to build, and created to solve a specific job before getting out of the way.”

Code gen, vibe, or write a spec?

GenAI models are the next accelerators for software development. The first tools were copilots for coding assistance, followed by LLMs for generating code snippets. I used code-generation tools to develop regular expressions, extract information from web pages, and categorize data as steps in an app migration. They wrote code that I no longer had the time or skills to develop on my own, but it still required significant work to fix defects and integration issues.

We’re now in a second-generation phase of AI software development, with platforms like Amazon Q Developer, Appian AI-Assisted Development, Bolt, Claude Code, Cline, Cursor, Gemini Code Assist, GitHub Copilot, Kiro, Lovable, OpenAI Codex, Pave, and Replit.

All these platforms generate code, but they offer different developer experiences and are used to address different scopes of work. They can be broken down into three categories:

- Code-generating tools enhance the developer experience by writing code on request from engineers and are often integrated into existing development tools.

- Vibe coding generates prototypes, features, and production-ready applications through an iterative prompt-based experience.

- Spec-driven development (SDD) creates an intermediary step before generating applications by allowing a development team to establish product requirements and compose other design documents iteratively through prompts, then generating code from them.

If you are developing a new API, refactoring existing code, enhancing a workflow, or building a new feature, then a code generator may be all you need. The developer’s work shifts from writing code to expressing what code needs to be written, the requirements, the development platform, and other non-functional acceptance criteria.

But what if you want to develop a new application, integration, data pipeline, or a robust web service? For this article, I wanted to look beyond code generation and consider how development teams can use vibe coding and spec-driven development platforms to build and support applications.

What vibe coding does well

The vibe coding experience enables developers to prompt what they are looking to build and to observe the AI as it generates code.

Vibe coding platforms like Bolt, Lovable, and Replit can start developing from a single prompt, but they demonstrate more capabilities when the developer goes into plan mode. In planning, a vibe coding platform may repeat back the requirements it understands, ask questions to elaborate on them, and offer options when requirements aren’t specified.

The “vibe” you get from these platforms is that they want to help developers go from idea to a functioning application quickly. Developers can then prompt the platform to refine requirements and request changes. And it’s not just developers; business owners, non-technical startup founders, and other citizen developers are vibe coding, though they must learn the security best practices.

“Vibe coding enables groups within the organization to create minimal viable products or small-scale tools that greatly increase their productivity,” says Duncan Ng, vice president of solutions engineering at Vultr. “Examples span proofs of concept that you want to put in front of potential consumers to receive feedback on product market fit, to laborious processes that can be streamlined to generate efficiency gains and increase velocity.”

Are vibes a viable production path?

A proof of concept (POC) or minimal viable product may be all a developer needs, but some question whether vibe-coded applications are ready for production. Rajesh Padmakumaran, vice president and AI practice leader at Genpact, says, “Vibe coding accelerates POCs, rapid experimentation, and idea exploration, but it lacks deterministic behavior, making it fundamentally unsuitable for systems that need to be maintained, scaled, or supported long-term.”

The negative sentiment isn’t just targeted at vibe coding, but at AI-generated code in general. Low-code and no-code platforms faced similar concerns in their early years around security, architecture, performance, and operational resiliency. Successful platform vendors established trust through transparency, and IT departments learned what scaffolding, processes, and documentation were needed to scale low- and no-code development. A similar transition is likely to happen with vibe coding platforms.

“Vibe coding accelerates experimentation, but without clear architectural constraints, observability, and performance guardrails, it introduces variability that breaks downstream systems in devops and IT operations,” says Piyush Patel, chief ecosystem officer at Algolia. “CIOs should treat vibe coding as a front-end accelerator while anchoring systems in well-defined specs that act as the ‘prompt layer’ for both humans and AI.”

Start with requirements

Another approach for using AI to develop applications is spec-driven development. Rather than jumping right into prompts to steer AI’s application development, SDD platforms shift-left the process, helping engineers document requirements. Based on those requirements, the SDD platforms then develop the application.

“Spec-driven development is all about structure and accountability,” said David Yanacek, senior principal engineer of agentic AI at AWS. “You spend some time talking about what you want and what good looks like, and it responds with requirements, a technical design, and a breakdown of the development tasks.”

Yanacek is an advisor to AWS Kiro’s development team. Much like non-AI development projects start with designs, product requirement documents, and agile user stories, SDD reinforces the need for collaborating across business and technology stakeholders before jumping into code. Two successful use cases are a drug-discovery AI agent deployed to production in three weeks and a technology company’s accelerated cloud migrations.

“Creating these documents keeps the AI focused on high-quality output, so I can go back and verify that it did what I asked it to,” adds Yanacek. “For example, the design document describes the system’s behavior in detail, including code snippets and the database schema. When you fully specify how a system or feature should behave, the agent can generate more and better tests to verify its output.”

SDD is gaining traction among devops teams that recognize the importance of collaborating with stakeholders on both feature and non-functional requirements.

“Spec-driven development is the natural maturation and evolution of vibe coding, where teams are fully maximizing the context window of their agent,” says Austin Spires, senior director of developer marketing at Fastly. “Spec-driven vibe coding forces engineers and teams to have a clearer vision, firmer requirements, and stronger writing than the first iterations of vibe coding.”

Nic Benders, chief technical strategist at New Relic, adds, “Production software doesn’t start with coding. It starts with thinking about the problem, figuring out what you want, and communicating that with your team. Spec-driven development puts a brand name on doing that thinking and writing, but with an AI tool as your team.”

Competing or complementary?

Are SDD and vibe coding competing approaches? Will an enterprise support two different methodologies? Or is SDD an evolution of the vibe coding experience? “Vibe coding and spec-driven development aren’t competing approaches; they’re complementary ones, each with a distinct role in the development life cycle,” says Ayaz Ahmed Khan, senior director of engineering at Cloudways by DigitalOcean. “Use vibe coding to explore and prototype, and spec-driven development with AI to harden and ship. The teams that succeed with genAI are the ones who mindfully guide it with constant feedback to build production-ready software.”

Others suggest that vibe coding and SDD will continue to serve different business needs and implementation strategies. “Vibe coding, especially with capable agentic systems, delivers extraordinary velocity for user-facing prototypes where the blast radius of a defect is small, like for internal tools or first POCs,” says Wiktor Walc, CTO at Tiugo Technologies. “But the moment you’re dealing with large production environments, distributed state, or transactional integrity, you start benefiting from spec-driven contracts between services—not because today’s models can’t reason about complex systems, but because no agentic workflow yet offers the kind of deterministic correctness guarantees that production-critical infrastructure demands.”

Focus on resilient releases

Planning and coding are just two steps in building and supporting applications. There are other opportunities to use AI in the software development life cycle for developing AI agents, including building in observability, integrating Model Context Protocol servers, and robust AI agent testing.

World-class IT departments need to consider how vibe coding and SDD drive business value, innovation, and reliability, more than just improving the coding aspects of delivering applications. To what extent does AI develop solutions that meet business requirements and deliver exceptional user experiences?

“Both vibe coding and SDD assume that the hard work of getting business and IT stakeholders aligned on the right requirements is already done, and this is especially true as enterprises look to reimagine and redesign many of their core workflows to leverage AI,” says Don Schuerman, CTO and vice president of marketing and technology strategy at Pegasystems. “The real opportunity for AI is not just to accelerate how code gets written, but to provide a collaborative canvas where business and IT teams can generate the designs and requirements for a truly reimagined application together.”

Much of today’s excitement is around how AI accelerates application development and developer productivity. But what about the deployment process and the infrastructure to run AI-developed applications?

One emerging trend is AI application development platforms that come bundled with cloud deployment infrastructure and business process automation services. AI-Assisted Development from Appian supports spec-driven development through its business interface Appian Composer and development tools such as Claude, Codex, and Kiro. Pave is a vibe coding platform that deploys to the same secure infrastructure as Quickbase and leverages its governance capabilities. These two examples illustrate how low-code development and process management platforms are evolving to embrace AI capabilities.

Experts remind IT leaders that whether you code, vibe, or adopt SDD, the emphasis should be on delivering resilient applications.

“The focus should be on engineering discipline and system design rather than pitting vibe coding and spec-driven development against each other,” says Sergei Kondratov, director of development at Saritasa. “The success of any AI-assisted development today depends on how well tasks are broken down and controlled. If that is done poorly, both approaches fail.”

Other experts point out that the quality of AI-generated code and the ease of maintaining AI-generated applications are open questions.

“Spec-driven development orients teams toward the right business and technical outcomes, while AI coding increases velocity, says Christian Stano, field CTO at Anyscale. “What matters is the interface where production software actually ships, where focus should solve the real bottleneck: whether review processes, infrastructure, and guardrails can keep pace. The key metric isn’t speed alone, but whether teams are accelerating without trading off reliability or accumulating hidden technical debt.”

Hannes Hapke, director of the 575 Lab at Dataiku, adds, “While vibe coding compresses the time to first demo, there are major concerns about debt, security, and auditability. Spec-driven preserves discipline but adds overhead, and the key opportunity is blending both. CIOs need to measure impact through time to release, bug rates, refactoring frequency, and developer satisfaction, not just velocity.”

There’s no doubt that vibe coding and SDD will evolve, and there’s a reasonable chance the two practices will converge into a generalized AI coding environment. One example is GitHub’s Spec Kit, which works with GitHub Copilot, Claude Code, and Gemini CLI, and treats spec writing as a prerequisite to vibe coding and code generation.

As AI’s development capabilities improve, IT will need to consider how to evolve the end-to-end development process and ensure new capabilities do more than improve velocity and productivity.

Diskless databases: What happens when storage isn’t the bottleneck 5 May 2026, 2:00 am

In 2021, I was developing software for an aerospace manufacturer and met with our machine learning team to discuss innovative approaches for tracking FOD (free-orbiting debris), a major security and operational concern in the industry. What struck me wasn’t the algorithms or tracking equipment, but the terabytes of data (up to petabytes) that were being produced.

Old-school problems of limited hardware resources and inefficient data compression were bottlenecking cutting-edge visual learning models and traditional tracking solutions alike. The team was smart and could fine-tune quickly, but the real challenge was making sure our infrastructure could scale with them.

In aerospace, performance hinges on how fast systems can absorb and interpret massive telemetry streams, and storage is often the silent limiter. When you’re generating terabytes to petabytes of data in a single test cycle, even a brief stall in the storage layer becomes a bottleneck. A few milliseconds of delay between what’s happening and what the system can write, index, or retrieve doesn’t just slow things down. It can compound through an entire run.

Traditional databases were built around disk constraints and batch workloads. But what happens when those limits no longer define what’s possible?

The diskless shift

Diskless architectures sidestep traditional constraints by separating compute from storage and removing local persistence from the critical path. Data is ingested and indexed in memory for immediate availability, while object storage provides the durable, elastic foundation underneath. The result is a database that accelerates both ingestion and retrieval without sacrificing persistence.

This design offers the best of both worlds: the elasticity and durability of object storage with the speed of in-memory caching. Compute and storage scale independently. Systems can scale continuously, recover automatically, and adapt to changing workloads without planned downtime or manual intervention.

Diskless design means data can be ingested, queried, and acted upon in real-time without trade-offs between cost, performance, and scale.

Why disks became the bottleneck

Traditional databases were built around disk constraints and transactional workloads, where latency between ingestion and retrieval doesn’t matter much. But for time series workloads, whether it’s telemetry, observability, IoT, industrial, or physical AI systems, that latency becomes the difference between insight and incident.

Diskless design combines the elasticity of cloud storage with the speed of in-memory indexing and caching. There is no complicated HA setup or heavy orchestration across a distributed system. Just linear, predictable performance.

Diskless architecture brings several benefits out of the box:

- High availability: Multi-AZ durability without complex replication.

- Zero migration: No data movement when upgrading or moving instances.

- Fault isolation: If one node fails, another can continue servicing requests with no downtime.

- Simplified scaling: Add or remove nodes on demand for ingest or query load.

What changes when the disk disappears

When storage is no longer the constraint, the entire performance profile of the database shifts. Instead of planning around limits, teams can rely on a system that remains responsive as data volumes grow, with capacity expanding in the background and compute scaling alongside demand.

This separation of compute and storage also unlocks operational simplicity. There’s no need to manage replicas or create fault isolation per node; the object store itself is able to provide this redundancy automatically. Enterprises gain petabyte-scale storage, continuous uptime, and a deployment model that adapts seamlessly across environments, whether it’s on-prem, cloud, or hybrid.

A new foundation for real-time systems

Removing the disk isn’t just a performance optimization, it’s a paradigm shift.

Predictive maintenance systems can now analyze live sensor telemetry continuously instead of batching overnight. Industrial control systems can react instantly to anomalies instead of waiting for downstream processors. AI and machine learning models can train against live data streams that tell a story instead of static snapshots that lack context.

When you eliminate the dependency on local storage, you eliminate an entire class of operational drag. The database becomes an active, real-time engine, not just a place to store data.

Architecting for what’s next

Diskless design is not an end point, but a foundation. Over the next decade, databases will continue to evolve from managing persistence to powering intelligence. Diskless architectures are a step in that direction, making the database not just faster, but fundamentally more capable of keeping up with the pace of the physical world.

Because when your systems depend on real-time decisions, the slowest part of your stack can’t be your database.

—

New Tech Forum provides a venue for technology leaders—including vendors and other outside contributors—to explore and discuss emerging enterprise technology in unprecedented depth and breadth. The selection is subjective, based on our pick of the technologies we believe to be important and of greatest interest to InfoWorld readers. InfoWorld does not accept marketing collateral for publication and reserves the right to edit all contributed content. Send all inquiries to doug_dineley@foundryco.com.

SAP to acquire data lakehouse vendor Dremio 4 May 2026, 8:03 pm

SAP on Monday announced plans to acquire Dremio, which bills itself as an agentic lakehouse company, for an unspecified price. The move is complicated by similar offerings from existing SAP partners Snowflake and Databricks, but analysts point to key differences with Dremio, especially in its ability to work with data while it sits in the enterprise’s environment, rather than having to live externally.

One of SAP’s justifications for the acquisition is that it will theoretically make it easier for IT executives to combine SAP data with non-SAP data. But its strongest rationale involves Dremio’s ability to make complex data more AI-friendly, so that it can more quickly and cost-effectively be made usable.

“Most enterprise AI projects fail to deliver value not because of the AI itself, but because the underlying data is fragmented, locked in proprietary formats and stripped of the business context that makes it meaningful,” the SAP announcement said. “The result is a familiar and costly pattern: pilots that cannot scale, slow integration of new data sources, duplicated engineering work and compliance risk when organizations cannot explain how an AI-driven decision was reached. Dremio helps eliminate that data fragmentation and integration friction.”

While SAP is citing the data quality argument, there are many elements of enterprise data quality, including data that is outdated, from unreliable sources, or that exists without meaningful context that aren’t addressed by Dremio.

However, SAP said, “With Dremio, SAP Business Data Cloud will become an Apache Iceberg-native enterprise lakehouse that unifies SAP and non-SAP data to power agentic AI at enterprise scale. Apache Iceberg is the industry-standard open table format, and SAP Business Data Cloud will natively support it as its foundation.” This means that there need be no data movement or format conversion; SAP and non-SAP data “can coexist on the same open foundation, with federated analytical reach across every enterprise data source.”

Complicated comparison

Analysts and consultants said that any comparison of Dremio to existing SAP partners Snowflake and Databricks is complicated. For example, Dremio is younger and less established than either Snowflake or Databricks, which suggests that it is a less ideal match for enterprises.

SAP strategy specialist Harikishore Sreenivasalu, CEO of Aarini Consulting in the Netherlands, said that both Snowflake and Databricks would have been ideal acquisition targets many years ago, but they would be far too expensive today.

“Databricks and Snowflake are better [for enterprise IT] for sure because they have a mature platform, they do multi cloud” whereas Dremio “is the new entrant in the market and they have to mature more to be enterprise ready. Their security aspects need to mature,” Sreenivasalu said.

But Sreenivasalu added that the situation could easily change after SAP invests and works with the Dremio team. He advised CIOs to “stick with where you are today but watch how technologies get integrated. Listen to the SAP roadmap.”

In a LinkedIn post, Sreenivasalu said the move still is very positive for SAP: “This is the missing piece. SAP has Joule. SAP has BTP. SAP has the business processes. Now it has the open data fabric to feed AI agents the context they need to act, not just answer. For those of us building on SAP BTP + Databricks + SAP BDC, this is a signal: the lakehouse and the ERP world are converging, fast. The future of enterprise AI just got a whole lot clearer.”

Addresses LLM limitations

During a news conference Monday morning, SAP executives focused on how this move potentially addresses some of the key large language model (LLM) limitations with enterprise data, especially with predictive analytics.

Philipp Herzig, SAP’s chief technology officer, said that LLMs have various limitations, noting, “LLMs don’t deal really well with numbers” and that they struggle with structured data “where we have a lot of differentiation.”

The practical difference is when systems try to predict the future as opposed to analyzing the past, such as when asking how well a retailer’s product will sell over the next 10 months, or predicting likely payment delays and their impacts on projected cashflow. “This is where LLMs struggle a lot,” Herzig said. He also stressed that Dremio’s ability to work with enterprise data while it still resides in that organization’s on-prem systems is critical for highly-regulated enterprises.

Local data difference

Flavio Villanustre, CISO for the LexisNexis Risk Solutions Group, also sees the ability to handle data locally as the big draw.

Databricks and Snowflake both offer strong functionality, he pointed out, but users must move the data to their platform and reformat it. After this is complete, the result is a central data lake to address data access needs. “Dremio, on the other hand, provides easy decentralized data access, allowing users to access their data in place,” he said. “Of course, this could be at the expense of data processing performance, but the ease of use and flexibility could outweigh the performance loss.” Implementation speed in days versus weeks or months is another plus, he added. “There is a significant benefit to that.”

Sanchit Vir Gogia, chief analyst at Greyhound Research, agreed with Villanustre, but only to a limited extent.